What Does ChatGPT Stand For? The Full Meaning Explained (2026)

ChatGPT stands for Chat Generative Pre-trained Transformer. Learn what each word means, how GPT technology works, and how ChatGPT compares to other AI models.

ChatGPT is one of the most widely used AI tools on the planet - with over 900 million weekly active users as of February 2026 (TechCrunch) - but most people who use it daily have no idea what the name actually means. If you have ever wondered what ChatGPT stands for, you are not alone. The name is more than a brand - it describes how the technology works.

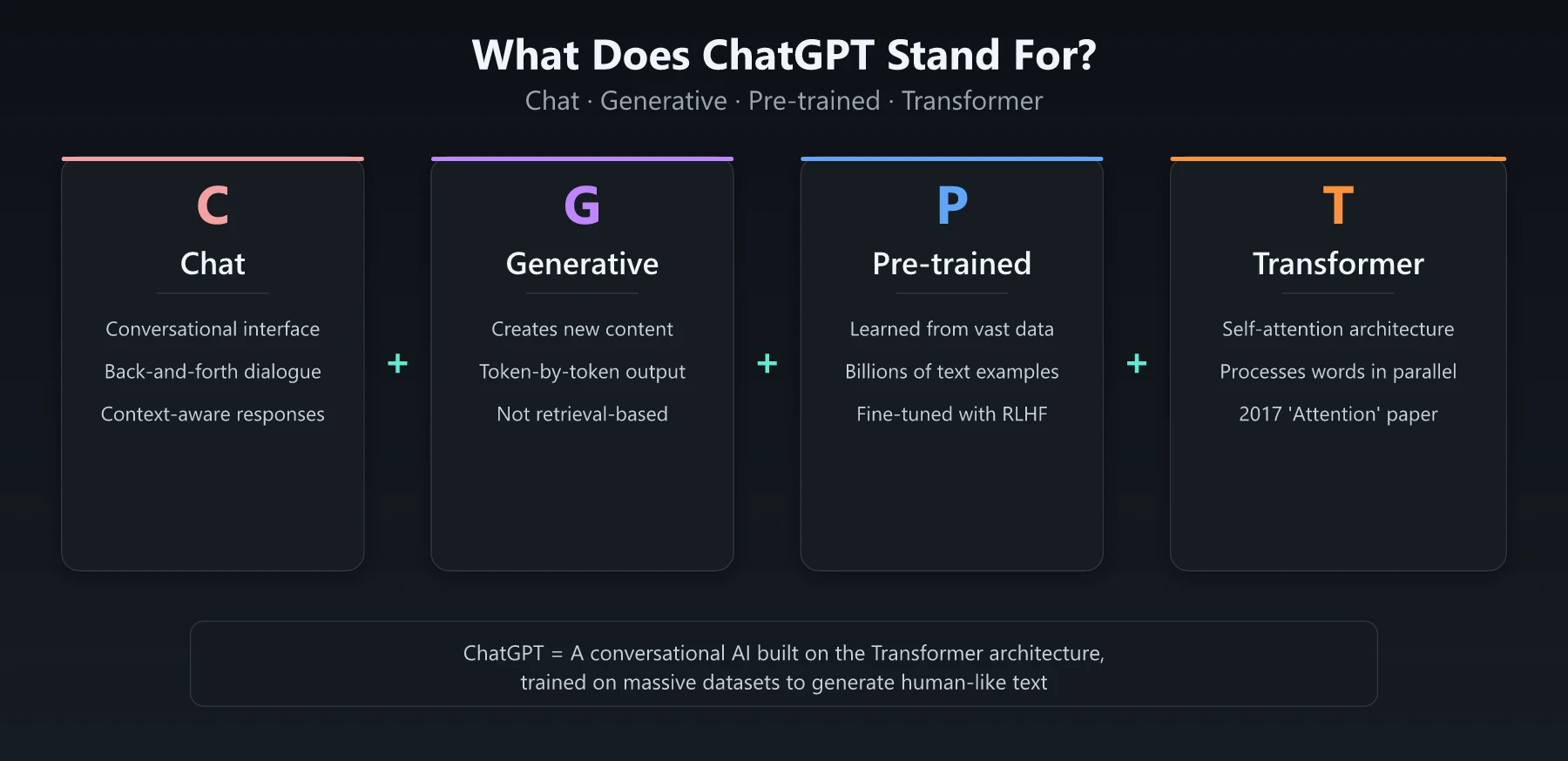

ChatGPT stands for Chat Generative Pre-trained Transformer. Each word in that name points to a specific piece of the technology behind the tool. Understanding what those words mean gives you a clearer picture of what ChatGPT can and cannot do - and why it works the way it does.

What Does ChatGPT Stand For? Breaking Down Each Word

The name ChatGPT has four parts: the word “Chat” followed by the three-letter acronym GPT. Here is what each part means in plain language.

Chat: The Conversational Interface

The “Chat” part is the simplest to understand. It means you interact with the AI through conversation - typing messages and receiving responses, back and forth. Before ChatGPT, most AI language models were accessed through APIs or research interfaces. OpenAI’s decision to wrap the GPT model in a chat interface is what made it accessible to hundreds of millions of people. The product reached 100 million monthly active users just two months after launch, making it the fastest-growing consumer app in internet history at the time, according to a UBS study citing Similarweb data.

The chat format also means the model keeps track of context within a conversation. It can refer back to what you said three messages ago and build on it, as long as the conversation fits within the model’s context window. This is different from a simple text-completion tool that treats every input as a fresh start.
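One common way to get this behavior is to send the earlier messages back to the model along with each new one. The sketch below illustrates that idea only; the `build_model_input` helper and the role/content message shape are assumptions made for illustration, not a description of OpenAI's internal implementation.

```python
# Toy illustration: a chat "remembers" earlier turns because the
# history is included in the input for every new turn.
# build_model_input is a hypothetical helper, not a real API call.
def build_model_input(history, new_message):
    """Return the full list of messages the model would see this turn."""
    return history + [{"role": "user", "content": new_message}]


history = [
    {"role": "user", "content": "My product is a budgeting app."},
    {"role": "assistant", "content": "Got it. Who is the target audience?"},
]

model_input = build_model_input(history, "Write a tagline for it.")

# The word "it" can be resolved because the first message is still present.
for message in model_input:
    print(message["role"], ":", message["content"])
```

A plain text-completion tool, by contrast, would receive only the last line and would have no way to know what “it” refers to.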

Generative: Creating New Content

“Generative” means the model creates new text rather than retrieving existing text from a database. When you ask ChatGPT a question, it is not looking up an answer from a stored index. It is generating a response word by word (technically, token by token) based on patterns it learned during training.

This is the core of what makes generative AI different from traditional search engines. A search engine finds and ranks existing pages. A generative model produces original text that did not exist before your query.

The generative capability is also why ChatGPT can write essays, draft code, translate languages, and produce creative fiction. It is not limited to factual retrieval - it generates language across any domain it was trained on.

Pre-trained: Learning From Data

“Pre-trained” refers to how the model was built. Before ChatGPT ever answered a single user question, it went through a massive training process on large datasets of text from the internet - books, articles, websites, code repositories, and more. For context, GPT-3 alone was trained on roughly 500 billion tokens gathered from sources including Common Crawl, WebText2, two book corpora, and Wikipedia (arXiv).

This pre-training phase is what gives the model its general knowledge. The model learned grammar, facts, reasoning patterns, coding syntax, and conversational norms all from analyzing billions of text examples.

After pre-training, the model goes through additional fine-tuning stages where human trainers provide feedback to make the responses more helpful and safer. But the foundation - the broad knowledge base - comes from that initial pre-training step.

Transformer: The Architecture

“Transformer” is the technical architecture that makes everything else possible. Introduced in a landmark 2017 paper titled “Attention Is All You Need” by researchers at Google, the Transformer architecture changed how machines process language. The paper has since been cited more than 173,000 times, placing it among the top ten most-cited papers of the 21st century (Wikipedia).

Before Transformers, the leading language models were recurrent networks that processed text sequentially - one word at a time, in order. Transformers introduced a mechanism called self-attention that lets the model look at all words in a sentence simultaneously and work out how they relate to each other.

Think of it this way: if you read the sentence “The bank by the river was covered in moss,” you instantly know “bank” means a riverbank, not a financial institution. Transformers replicate that kind of contextual understanding by weighing the relationships between every word in the input.
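The weighing step can be sketched in a few lines of Python. This is a minimal illustration of scaled dot-product attention weights, assuming made-up two-dimensional word vectors; a real Transformer uses learned query and key projections with hundreds or thousands of dimensions, so treat the numbers here as placeholders.

```python
import math


def softmax(scores):
    """Turn raw scores into positive weights that sum to 1."""
    highest = max(scores)
    exps = [math.exp(s - highest) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attention_weights(query, keys):
    """Scaled dot-product attention weights for one query over all keys."""
    scale = math.sqrt(len(query))
    scores = [sum(q * k for q, k in zip(query, key)) / scale for key in keys]
    return softmax(scores)


# Hypothetical vectors, chosen by hand so that "bank" and "river" are similar.
words = ["the", "bank", "river"]
vectors = {"the": [0.1, 0.0], "bank": [1.0, 1.0], "river": [1.0, 2.0]}

# How much should "bank" attend to each word in the sentence?
weights = attention_weights(vectors["bank"], [vectors[w] for w in words])
for word, weight in zip(words, weights):
    print(f"{word}: {weight:.2f}")
```

In this toy setup “river” receives far more weight than “the”, which is the kind of signal that lets the model read “bank” as a riverbank.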

This architecture is what makes modern large language models fast enough and accurate enough to be useful. Nearly every major AI language model today - including Claude, Gemini, and Llama - uses the Transformer architecture or a variant of it.

A Brief History of ChatGPT and GPT Models

Understanding what ChatGPT stands for also means understanding where it came from. OpenAI has released several generations of GPT models, each significantly more capable than the last.

| Model | Release | Key Milestone |
|---|---|---|
| GPT-1 | June 2018 | Proved pre-training + fine-tuning worked for language tasks. 117 million parameters. |
| GPT-2 | February 2019 | Generated coherent multi-paragraph text. 1.5 billion parameters. OpenAI initially withheld the full model over misuse concerns. |
| GPT-3 | June 2020 | 175 billion parameters - 10x more than any previous non-sparse language model. Demonstrated few-shot learning - solving tasks with minimal examples. Opened API access. |
| GPT-3.5 | November 2022 | Powered the launch of ChatGPT. Fine-tuned with RLHF for conversational use. |
| GPT-4 | March 2023 | Multimodal (text + image input). Significantly better reasoning, coding, and accuracy. |
| GPT-4o | May 2024 | “Omni” model handling text, vision, and audio natively. Faster and cheaper than GPT-4. |
| GPT-4.5 | February 2025 | Larger model focused on improved “EQ” - better at understanding nuance and reducing hallucinations. |
| o1 / o3 | 2024-2025 | Reasoning-focused models using chain-of-thought at inference time for complex problem-solving. |

The jump from GPT-3 to GPT-3.5 is where ChatGPT was born. OpenAI took the base GPT-3.5 model and applied Reinforcement Learning from Human Feedback (RLHF) to make it conversational, helpful, and safer. That fine-tuned version became the ChatGPT product that launched in November 2022 - and the growth since then has been staggering. ChatGPT went from 200 million to 400 million weekly active users in under six months between August 2024 and early 2025 (TechCrunch), then hit 800 million by October 2025 (TechCrunch). OpenAI is now valued at $852 billion after closing a record $122 billion funding round in March 2026 (TechCrunch).

How ChatGPT Actually Works

Now that you know what ChatGPT stands for, here is a simplified look at how it operates under the hood.

Step 1: Tokenization

When you type a message, ChatGPT breaks your input into tokens - small chunks of text that average about four characters each in English. The word “marketing” might become two tokens: “market” and “ing.” The model works with tokens, not whole words.
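A toy version of that splitting can be sketched as follows. This is a simplified greedy longest-match tokenizer over a tiny hand-written vocabulary, both of which are assumptions for illustration; production tokenizers use byte pair encoding with vocabularies of many thousands of entries learned from data.

```python
def tokenize(text, vocab):
    """Split text into the longest vocabulary pieces, left to right."""
    tokens = []
    i = 0
    while i < len(text):
        # Try the longest candidate first, then shrink it.
        for j in range(len(text), i, -1):
            if text[i:j] in vocab:
                tokens.append(text[i:j])
                i = j
                break
        else:
            # No vocabulary entry matched: emit the single character.
            tokens.append(text[i])
            i += 1
    return tokens


# Hypothetical vocabulary, far smaller than any real one.
vocab = {"market", "ing", "mark", "et"}

tokens = tokenize("marketing", vocab)
print(tokens)  # ['market', 'ing']
```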

Step 2: Self-Attention

The Transformer architecture processes all tokens at once through layers of self-attention. Each layer helps the model figure out which parts of your input are most relevant to each other. This is how it understands context, pronouns, and implied meaning.

Step 3: Prediction

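The model generates its response one token at a time. At each step it assigns a probability to every possible next token based on everything that came before it, then picks one, usually by sampling from the most likely candidates rather than always taking the single top choice. Each prediction is informed by the full context of your conversation - your message, the chat history, and the system prompt.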
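The generation loop itself is simple enough to sketch. The example below assumes a hypothetical lookup table of next-token probabilities keyed on the previous token only; a real model computes these probabilities with a neural network over the entire context, so the table stands in for the part that actually matters.

```python
import random

# Hypothetical probabilities, written by hand for illustration.
NEXT_TOKEN_PROBS = {
    "<start>": {"Chat": 0.9, "The": 0.1},
    "Chat": {"GPT": 0.8, "bots": 0.2},
    "GPT": {"predicts": 0.7, "<end>": 0.3},
    "predicts": {"tokens": 0.9, "<end>": 0.1},
    "tokens": {"<end>": 1.0},
    "The": {"<end>": 1.0},
    "bots": {"<end>": 1.0},
}


def generate(rng=None, max_tokens=10):
    """Emit one token at a time until <end> or max_tokens is reached.

    With rng=None the most likely token is always chosen (greedy decoding).
    With a random.Random instance, tokens are sampled by probability.
    """
    output = []
    current = "<start>"
    for _ in range(max_tokens):
        probs = NEXT_TOKEN_PROBS[current]
        if rng is None:
            current = max(probs, key=probs.get)
        else:
            current = rng.choices(list(probs), weights=list(probs.values()))[0]
        if current == "<end>":
            break
        output.append(current)
    return output


print(generate())                  # ['Chat', 'GPT', 'predicts', 'tokens']
print(generate(random.Random(0)))  # sampled; can differ from the greedy path
```

Sampling is one reason the same prompt can produce different answers on different runs.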

Step 4: RLHF Safety Layer

Raw GPT models can produce harmful, biased, or unhelpful content. RLHF reduces this, although it does not eliminate it. Unlike the first three steps, it does not run when you send a message; it happens during training. Human trainers ranked different model responses, and the model was tuned to prefer responses that humans rated as helpful, accurate, and safe.

This is why ChatGPT sometimes refuses certain requests or adds caveats to its responses. The RLHF training taught it to be cautious in specific situations.
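The ranking step can be made concrete with a small calculation. In published RLHF pipelines, human rankings are typically used to train a separate reward model with a pairwise loss that is small when the model scores the human-preferred response higher. The sketch below shows that loss on made-up reward scores; the article does not specify which loss OpenAI uses for ChatGPT, so this is an assumption for illustration.

```python
import math


def preference_loss(reward_preferred, reward_rejected):
    """Pairwise ranking loss: -log(sigmoid(r_preferred - r_rejected))."""
    diff = reward_preferred - reward_rejected
    # -log(sigmoid(x)) is algebraically equal to log(1 + exp(-x)).
    return math.log(1.0 + math.exp(-diff))


# Hypothetical reward scores for two responses to the same prompt.
agrees_with_humans = preference_loss(2.0, -1.0)     # preferred scored higher
disagrees_with_humans = preference_loss(-1.0, 2.0)  # preferred scored lower

print(f"loss when ranking matches humans: {agrees_with_humans:.3f}")
print(f"loss when ranking is reversed:    {disagrees_with_humans:.3f}")
```

Training pushes the loss down, which pushes the reward model toward the human ranking; the chat model is then tuned to produce responses that score well.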

If you want to get better results from this process, mastering your prompts makes a significant difference. Check out our guide to ChatGPT prompts for marketing for templates that work with how the model actually processes language.

ChatGPT vs Other AI Models

ChatGPT is not the only AI model built on the Transformer architecture. Here is how it compares to the other major options available in 2026.

| Feature | ChatGPT (OpenAI) | Claude (Anthropic) | Gemini (Google) | Grok (xAI) |
|---|---|---|---|---|
| Architecture | Transformer (GPT-4o / o3) | Transformer | Transformer (MoE variant) | Transformer |
| Free tier | Yes (GPT-4o limited) | Yes (limited) | Yes (Gemini 1.5 Flash) | Yes (with X account) |
| Max context window | 128K tokens | 200K tokens (1M extended) | 1M+ tokens | 128K tokens |
| Multimodal | Text, image, audio, video | Text, image | Text, image, audio, video | Text, image |
| Web browsing | Yes | Yes | Yes (grounded in Search) | Yes (real-time X data) |
| Code execution | Yes (built-in sandbox) | Yes (tool use) | Yes | Yes |
| Best for | General-purpose, plugins ecosystem | Long documents, careful analysis | Google Workspace integration | Real-time social data |

All of these models share the same core architecture - the Transformer - but they differ in training data, fine-tuning approach, safety philosophy, and product features. The “GPT” in ChatGPT refers specifically to OpenAI’s family of models, but the underlying Transformer concept is industry-wide. The commercial stakes are enormous: OpenAI hit $10 billion in annualized recurring revenue by mid-2025 (TechCrunch), fueled primarily by ChatGPT subscriptions and API usage.

For a broader look at how AI tools fit into professional work, see our comparison of free AI tools for marketing.

How Marketers and Professionals Use ChatGPT

Knowing what ChatGPT stands for is useful, but knowing how to use it effectively is what matters. The adoption numbers speak for themselves: over 92% of Fortune 500 companies are building on OpenAI’s products (OpenAI), and OpenAI surpassed 1 million business customers in November 2025 (OpenAI). The platform also has over 50 million paying subscribers (TechCrunch). Here are the primary ways professionals put it to work.

Content Creation and Editing

ChatGPT can draft blog posts, email copy, social media captions, ad copy, and product descriptions. The key is treating its output as a first draft, not a finished product. Edit for your brand voice, verify facts, and add original insights. The generative nature of the model means it produces plausible text - but plausible is not the same as accurate.

Research and Summarization

The model excels at synthesizing information across topics. Feed it a long report and ask for a summary. Give it three competitor product pages and ask for a comparison table. These tasks play to the Transformer’s strength of understanding relationships between large amounts of text.

Strategy and Brainstorming

ChatGPT is effective as a thinking partner. Use it to pressure-test your positioning, generate campaign concepts, or identify gaps in a go-to-market plan. The model has seen enough business content to provide useful frameworks - just do not outsource your final strategic decisions to it.

Coding and Automation

From writing Python scripts to building Excel formulas to creating SQL queries, ChatGPT helps professionals automate repetitive technical tasks. The pre-training on code repositories means it handles most common programming languages competently.

Curious about whether AI will take over entire marketing roles? The answer is more nuanced than you might expect. Read our article on whether marketing will be replaced by AI for a grounded take.

What ChatGPT Cannot Do

Understanding the name also means understanding the limits.

It does not know current events by default. The pre-trained knowledge has a cutoff date. Web browsing features help, but the base model is not aware of what happened yesterday unless it searches for it.

It generates plausible text, not verified truth. The generative process optimizes for language patterns, not factual accuracy. Always fact-check critical information from ChatGPT against primary sources.

It does not truly “understand” anything. The Transformer architecture is sophisticated pattern matching at scale. ChatGPT does not have beliefs, intentions, or understanding in the human sense. It predicts tokens. The results are often impressive, but the mechanism is statistical, not cognitive.

Conclusion: What Does ChatGPT Stand For and Why It Matters

ChatGPT stands for Chat Generative Pre-trained Transformer - a name that describes exactly what the technology does. “Chat” is the interface. “Generative” is the capability. “Pre-trained” is the method. “Transformer” is the engine.

Understanding what each part means helps you use the tool more effectively. When you know that responses are generated (not retrieved), you know to fact-check. When you understand pre-training, you understand why the model has knowledge gaps. When you grasp how Transformers work, you understand why context and clear prompting produce better results.

ChatGPT is a powerful tool, but it is a tool. The professionals who get the most from it are the ones who understand what it actually is - and what it is not. Start by refining your prompts with our ChatGPT prompts for marketing guide, and build from there.

Frequently Asked Questions

What does ChatGPT stand for?

ChatGPT stands for Chat Generative Pre-trained Transformer. “Chat” refers to its conversational interface, “Generative” means it creates new text, “Pre-trained” means it learned from large datasets before deployment, and “Transformer” is the neural network architecture it uses.

Who created ChatGPT?

ChatGPT was created by OpenAI, an AI research company co-founded in 2015. OpenAI launched ChatGPT in November 2022, and it became the fastest-growing consumer application at the time.

What is the difference between GPT and ChatGPT?

GPT is the underlying language model technology. ChatGPT is a specific product built on top of GPT that adds a conversational interface, safety tuning through RLHF, and features like memory, web browsing, and code execution.