LLM Optimization: How to Get Cited by ChatGPT, Perplexity, and Gemini (2026)

LLM optimization is how you earn citations in ChatGPT, Perplexity, Claude, and Gemini answers. Signals that matter, structure they reward, 2026 checklist.

Sam Altman announced at OpenAI’s October 2025 Dev Day that ChatGPT had crossed 800 million weekly active users. A growing share of those users now research, compare, and shortlist products inside the chat - and when they ask ChatGPT, Perplexity, Claude, or Gemini for a recommendation, the answer cites a handful of sources, not ten blue links. LLM optimization is the practice of being one of those citations.

Below: what LLM optimization is, how it differs from traditional SEO and AEO, the four signal layers that matter, and a 2026 audit checklist you can run on any page in 30 minutes.

What Is LLM Optimization?

LLM optimization is the practice of structuring web content so that large language models retrieve, parse, and cite it inside generated answers. The models in scope:

- ChatGPT (chatgpt.com, ChatGPT Search)

- Perplexity (perplexity.ai)

- Google Gemini and AI Overviews

- Claude (claude.ai with web search)

- Microsoft Copilot (Bing-grounded)

Each model has its own retrieval pipeline. Some use partner search indexes (Bing, Google). Some use proprietary crawls. Most use a hybrid. The shared output is the same - a generated answer with inline citations. LLM optimization is how your URL becomes one of those citations.

It is the technical evolution of AEO vs SEO. Where AEO focused on featured snippets and voice answers, LLM optimization adds the layer of being chunkable, attributable, and topically deep enough to be the source the model trusts.

How LLM Optimization Differs from SEO and AEO

Three disciplines overlap but optimize for different outputs.

| Discipline | Optimizes for | Primary metric |

|---|---|---|

| SEO | Ten blue links and a click | Organic traffic, rankings |

| AEO | Featured snippets, voice answers, AI Overview citations | Snippet ownership, AI Overview presence |

| LLM optimization | Citations inside ChatGPT, Perplexity, Claude, Gemini answers | Referral traffic from AI sources, citation share of voice |

The on-page work overlaps heavily. The difference is that LLMs reward structure and clarity that traditional rankings tolerate but do not require. A page that ranks #4 in Google can still be the only one ChatGPT cites - because it answered the question more cleanly.

For the AEO foundation, see AEO vs SEO and AI Overview Optimization.

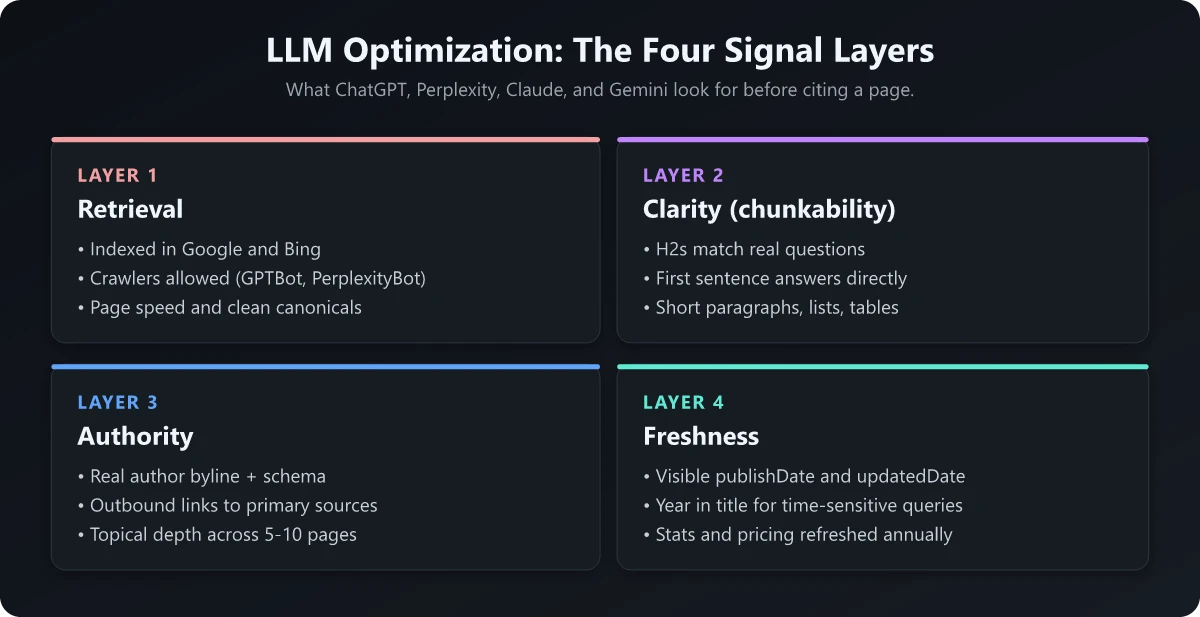

The Four Signal Layers LLMs Care About

After auditing dozens of pages that get cited consistently, the same four signal layers show up.

Layer 1: Retrieval

The model has to find the page first. This is where SEO still does most of the work.

- Index in Google and Bing (most LLMs use one or both as their retrieval substrate)

- Ship a clean XML sitemap and robots.txt

- Allow the relevant LLM crawlers (

GPTBot,PerplexityBot,ClaudeBot,Google-Extended) - or block them deliberately if you have a reason - Maintain page speed; slow pages get deprioritized in retrieval at scale

- Use canonical tags and avoid thin duplicates

A page that does not rank in the top 20 organic results for a topic almost never gets retrieved by an LLM for that topic. Fix retrieval before fixing anything else.

Layer 2: Clarity (chunkability)

LLMs do not read pages the way humans do. They chunk content into short token segments (typically a few hundred tokens), embed them, and retrieve the chunk most relevant to the query. The page structure has to support that.

- Lead each H2 with the question or claim, not a clever headline

- Use short paragraphs (2-4 sentences) and bulleted lists for structured information

- Put the answer to a question in the first sentence under the relevant heading

- Avoid burying claims in long narrative paragraphs

- Use comparison tables for “X vs Y” content - LLMs parse tables cleanly

- Keep sentence structure simple. One claim per sentence beats a complex multi-clause one.

The “lead with the answer” pattern is the single highest-ROI structural change. A page that buries the answer in paragraph four is harder to chunk than one that opens with it.

Layer 3: Authority

Once a chunk is retrieved, the model decides whether to cite it. The authority signals look like SEO authority signals with two extras.

- Author byline with a real name, role, and link to a profile or LinkedIn

- Primary-source citations linking out to research, tool docs, or original reports - LLMs reward pages that themselves cite well

- Topical depth - the model looks at whether your domain has 5-10 related pages, not just one

- Schema markup -

Article,FAQPage,HowTo,BreadcrumbList,Organizationall help retrieval and citation logic - External mentions - inbound links and brand mentions still count, exactly like classic SEO

The authority test: would a reasonable buyer accept this page as a credible source? If the answer is “depends,” the page is not yet citation-grade.

Layer 4: Freshness

LLMs rank dated content lower for time-sensitive queries.

- Show a

publishDatealways, and anupdatedDatewhenever content is meaningfully revised - Expose both as

datePublishedanddateModifiedinArticleschema, even when they are the same - Refresh stats, pricing, and product mentions at least once a year

- Note the year in the title for queries where freshness matters (“(2026)”)

A two-year-old page with the right structure can still get cited. A two-year-old page with stale stats and no update marker rarely will.

On-Page Structure That Earns Citations

The pages that get cited most often share a structural pattern. None of it is novel - it is just executed deliberately.

| Element | Why LLMs reward it |

|---|---|

| Question-style H2s | Match the user’s query directly; chunk cleanly |

| First-sentence answer | Lets the model lift the chunk without rewriting |

| Comparison tables | Parse cleanly; rare in pure text content |

| FAQ schema | Gives the model a labeled Q&A block to lift |

| Internal links to topical siblings | Reinforces topical authority |

| Outbound primary-source links | Signals you are not the source of last resort |

| Code blocks and example snippets | Indicate concreteness for technical queries |

The combined effect: a page that reads cleanly to a human, parses cleanly to a model, and links cleanly to other pages on your domain that cover adjacent questions.

llms.txt and Robots Configuration

A modern site treats LLMs as a first-class crawler population. Two files matter.

llms.txt

A proposed standard (see llmstxt.org) that publishes a markdown index of your highest-value content for LLMs to ingest. The format is simple - a markdown file with sections of links and one-line descriptions. swapbiswas.com publishes one at the root, plus a llms-full.txt with full content.

Adoption across models is partial. The cost of adding one is near-zero, so the asymmetric bet says ship it.

robots.txt for LLM crawlers

Each major LLM operator publishes one or more named crawlers. They split into three roles - training, search retrieval, and user-initiated fetches - and each can be allowed or blocked independently. The verified set as of 2026:

# OpenAI - GPTBot is for training; OAI-SearchBot powers ChatGPT search;

# ChatGPT-User fetches pages when a user clicks or asks during a chat.

User-agent: GPTBot

Allow: /

User-agent: OAI-SearchBot

Allow: /

User-agent: ChatGPT-User

Allow: /

# Anthropic - ClaudeBot is for training; Claude-SearchBot powers search

# relevance; Claude-User handles in-chat user fetches.

User-agent: ClaudeBot

Allow: /

User-agent: Claude-SearchBot

Allow: /

User-agent: Claude-User

Allow: /

# Perplexity - PerplexityBot indexes for search; Perplexity-User handles

# user-initiated visits inside the Perplexity product.

User-agent: PerplexityBot

Allow: /

User-agent: Perplexity-User

Allow: /

# Google-Extended controls whether your content trains Gemini and Vertex AI.

# It is separate from Googlebot, which still handles classic Google Search indexing.

User-agent: Google-Extended

Allow: /Sources for the crawler names: OpenAI, Anthropic, Perplexity, and Google’s Web Publisher Controls announcement.

Blocking these does not stop the model from generating answers about your topic - it just stops the model from citing your page. Most B2B publishers should allow them. Most paywalled or proprietary publishers should not.

A 30-Minute LLM Optimization Audit

Run this on any page where citation share matters.

| Check | Pass criteria |

|---|---|

| Page indexed in Google and Bing | site:domain.com/path returns the URL |

| H2s match real questions | Pull H2s; they read like queries a buyer would type |

| First-sentence answers under each H2 | The answer lives in the first sentence, not paragraph four |

| FAQ schema present | View source; FAQPage JSON-LD validates in Google’s tool |

| Article schema with author | Article schema includes a real author block |

| Outbound primary-source links | At least 3 links to non-affiliate primary sources |

| Internal links to siblings | At least 3 links to related pages on the same domain |

| Page renders in <2s on 4G | Lighthouse mobile performance ≥85 |

| Last updated within 12 months | Visible updatedDate if anything has changed |

| llms.txt published at root | domain.com/llms.txt returns 200 |

| Robots allow GPTBot, PerplexityBot, ClaudeBot, Google-Extended | Or blocked deliberately, with a reason |

If any check fails, fix it before publishing the next page. The compound effect of fixing them across an entire site is bigger than any single tactic.

How to Measure LLM Citations

There is no Google Search Console for LLMs yet. Three approximations:

- Referral traffic in GA4 from

chatgpt.com,perplexity.ai,gemini.google.com,claude.ai,copilot.microsoft.com. Filter by source and watch the trendline. - Direct LLM testing. Run your top 20 target queries through ChatGPT, Perplexity, and Gemini once a month. Track whether your URL is cited. A simple spreadsheet works.

- AI citation tools. Ahrefs Brand Radar, Profound, Otterly, and a small but growing list of others claim to track citations across major LLMs. Coverage is partial; treat the data as directional.

The honest answer in 2026 is that measurement lags behind the channel. Track what you can, ship structural improvements anyway, and the traffic shows up before the dashboards do.

What LLM Optimization Will Not Do

Two things to be honest about.

It will not save a thin page. LLMs reward depth. A 400-word page with the right structure still loses to a 2,000-word page with the same structure. Get the substance right first.

It will not generate clicks the way SEO does. LLMs answer the question inside the chat. Some users click through; many do not. The right way to think about LLM optimization is brand presence inside answers, not a one-to-one traffic substitute. The traffic mix shifts; the volume per citation drops.

For the broader strategy, see AI SEO Strategy and AI Future of SEO.

The Bottom Line

LLM optimization is not a separate discipline from SEO. It is the next layer on top. The retrieval signals are the same. The clarity, authority, and freshness signals get sharper. The output is no longer a click - it is a citation that earns brand presence in the answer.

The teams that win are the ones who treat LLM citations as the new home page. Every product, comparison, and how-to page becomes infrastructure for being mentioned by a model that hundreds of thousands of buyers query every day. The mechanics are unglamorous. The compounding is large.

Frequently Asked Questions

What is LLM optimization?

LLM optimization is the practice of structuring web content so it gets retrieved, parsed, and cited by large language models like ChatGPT, Perplexity, Claude, and Gemini. It overlaps with SEO and AEO but adds requirements unique to how LLMs ingest and ground their answers - chunkability, source authority, and unambiguous factual claims.

How is LLM optimization different from SEO?

SEO optimizes for ten blue links and a click. LLM optimization optimizes for a citation inside an AI-generated answer that may or may not earn the click. The on-page signals overlap heavily - clean structure, schema markup, page speed - but LLMs weight things SEO does not, like extractable Q&A blocks, primary-source claims, and topical authority across multiple pages.

Do LLMs use Google's search index?

It depends on the model. Google Gemini grounds answers using Google Search. Perplexity and ChatGPT Search use a mix of their own crawls plus partnerships. Claude can use web search via the API. The practical takeaway: rank in Google and Bing for your topic and you cover most LLM retrieval paths, but you still need LLM-specific structure to get cited once retrieved.

What is llms.txt?

llms.txt is a proposed standard that lets sites publish a markdown index of their highest-value content for LLMs to ingest. It is not yet adopted by every model, but adding one is low-cost and signals you are an LLM-aware publisher. swapbiswas.com publishes llms.txt and llms-full.txt at the root.

How do I know if my content is being cited by LLMs?

There is no Google Search Console for LLMs yet. The practical signal is referral traffic from chatgpt.com, perplexity.ai, and gemini.google.com in your analytics. A handful of tools (Ahrefs Brand Radar, Profound, Otterly) attempt to track AI citations directly. For now, treat LLM traffic as an emerging channel - measure it where you can and assume the signal is partial.