What Is Win-Loss Analysis? Templates, Questions, and Examples (2026)

Win-loss analysis explained: the interview process, the 12 questions every PMM should ask, the categorization framework, and how to turn findings into pipeline.

Gong’s 2024 research found that the average number of competitive mentions in B2B deals has increased by 57% since 2022. More competitive scrutiny means more deals decided on details only visible in hindsight - and the deals you lose teach more than the deals you win. Most companies still skip the lesson because the work is uncomfortable and the payoff lands two quarters later. Win-loss analysis is the structured way to extract it.

What this guide covers: what win-loss analysis is, the interview process that produces honest answers, the 12 questions every program should ask, the categorization framework that turns transcripts into action, and the deliverables that turn findings into pipeline.

What Is Win-Loss Analysis?

Win-loss analysis is a structured research process that interviews buyers from recently won and lost deals to understand why each deal went the way it did. The output is a synthesized view of:

- Why you win - the strengths buyers actually pay for

- Why you lose - the gaps, mistakes, and competitor strengths that flip deals

- What changed minds - the specific moments in a sales cycle that tipped the decision

- What pricing buyers will accept - revealed-preference data, not survey claims

The findings feed into three teams. Product hears about gaps. Marketing hears how buyers describe the problem and the alternatives. Sales hears which objections recur and which battlecard answers actually land.

Done well, win-loss is often the highest-ROI research program a B2B company runs. Done badly, it produces a 40-page deck nobody reads.

Why Most Companies Get Win-Loss Wrong

Three failure modes account for almost every weak win-loss program.

- Surveys instead of interviews. A buyer who fills out a form gives you check-box answers. A buyer in a 30-minute conversation gives you the real reason. Surveys are not win-loss.

- Only interviewing wins. Wins mostly confirm what you already believe. Losses - especially “lost to no decision” - show you what is broken.

- The losing rep is the interviewer. The buyer will not tell the rep who lost the deal that the rep was annoying, the demo missed the point, or pricing was the problem. They will say “we went a different direction” and hang up.

A program that fixes all three of those - structured interviews, balanced sample, neutral interviewer - produces findings worth shipping.

The Win-Loss Process

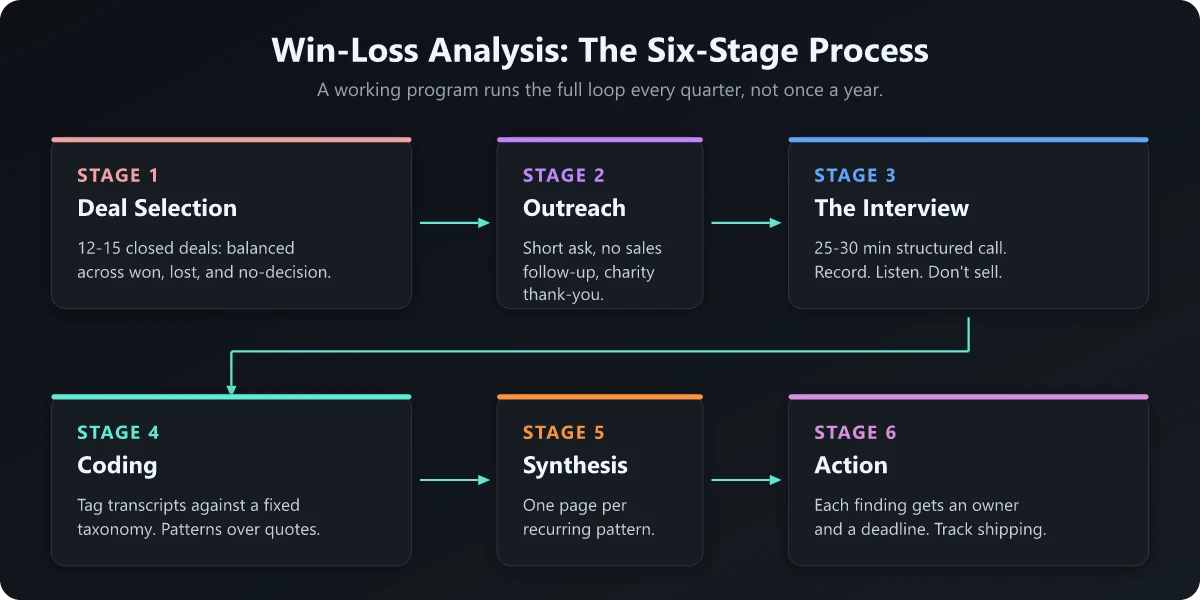

A working win-loss program has six stages. The whole loop runs every quarter.

Stage 1: Deal Selection

Pick 12-15 recently closed deals with a balanced split:

- 4-5 wins

- 4-5 losses to a named competitor

- 4-5 losses to no decision (status quo, budget cut, deferred)

Skew toward your ICP. Deals from poor-fit accounts produce noise, not signal.
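If your closed deals live in a CRM export, the selection rule above can be sketched in a few lines of Python. This is a minimal sketch under stated assumptions:

- The `Deal` record, its field names, and the three outcome labels are hypothetical. They are not any CRM's schema.
- The function drops poor-fit accounts.
- It then keeps the most recently closed deals in each outcome bucket, up to a cap per bucket.

```python
from dataclasses import dataclass
from datetime import date

# Hypothetical outcome labels for the three buckets in Stage 1.
BUCKETS = ("won", "lost_competitor", "lost_no_decision")

@dataclass
class Deal:
    name: str
    outcome: str      # one of BUCKETS (assumed labels, not a CRM field)
    icp_fit: bool     # True if the account matches your ICP
    closed_on: date

def select_deals(deals, per_bucket=5):
    """Pick the most recently closed ICP-fit deals, up to per_bucket per outcome."""
    selected = {bucket: [] for bucket in BUCKETS}
    # Walk deals newest first so recent closes fill each bucket before older ones.
    for deal in sorted(deals, key=lambda d: d.closed_on, reverse=True):
        if not deal.icp_fit or deal.outcome not in selected:
            continue  # poor-fit accounts add noise, not signal
        if len(selected[deal.outcome]) < per_bucket:
            selected[deal.outcome].append(deal)
    return selected
```

With `per_bucket=4` or `5`, the three buckets together give the 12-15 deal sample described above. If a bucket comes back short, that is worth knowing before outreach starts.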

Stage 2: Outreach

Email the buyer (not the champion - the actual decision maker where possible) with a low-friction request. The pitch that works:

Hi [name], we are running a quick research program with recent buyers. Would you be open to a 25-minute call with our research lead about your evaluation process? We pay $100 to a charity of your choice as a thank you. No sales follow-up.

The two non-negotiable parts: short call, no sales follow-up. The combination - low time ask, no sales angle, and a small charitable thank-you - lifts acceptance rates well above what a generic survey or “we’d love your feedback” email gets.

Stage 3: The Interview

Run the interview as a 25-30 minute structured conversation. Record (with permission) and transcribe. Use the 12-question script below. Follow up on anything the buyer dwells on.

The single rule: interviewers do not defend, sell, or correct. They listen.

Stage 4: Coding

Tag every transcript against a fixed taxonomy. A workable starting taxonomy:

| Theme | Sub-themes |
|---|---|
| Product capability | Feature gaps, integration gaps, scale limits, UX issues |
| Sales process | Discovery quality, demo fit, follow-up speed, mutual action plan |
| Pricing | Sticker price, packaging, terms, ROI clarity |
| Competitive | Competitor strength, competitor weakness, switching cost |
| Buyer process | Buying committee, internal politics, no-decision drivers |
| Brand and trust | Awareness, customer references, security/compliance |

Coding produces counts: how many interviews mention each theme, for example 4 of 12, 8 of 12, or 2 of 12. The pattern is what matters, not any single quote.
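A minimal Python sketch of the counting step follows. It assumes each coded transcript has already been reduced to a list of theme labels from the taxonomy above. The input shape and the function names are hypothetical.

Each theme counts at most once per interview. A buyer who mentions pricing five times still adds one interview to the pricing count, not five.

```python
from collections import Counter

def theme_counts(coded_interviews):
    """Count how many interviews mention each theme (once per interview)."""
    counts = Counter()
    for themes in coded_interviews:
        counts.update(set(themes))  # dedupe so one talkative interview counts once
    return counts

def summarize(coded_interviews):
    """Return lines like 'Pricing: 2 of 3 interviews', most frequent first."""
    total = len(coded_interviews)
    counts = theme_counts(coded_interviews)
    return [f"{theme}: {n} of {total} interviews" for theme, n in counts.most_common()]

coded = [
    ["Pricing", "Competitive", "Pricing"],
    ["Pricing", "Sales process"],
    ["Buyer process"],
]
print(summarize(coded)[0])  # Pricing: 2 of 3 interviews
```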

Stage 5: Synthesis

Roll the coded data into a one-page-per-theme summary:

- The pattern (e.g., “Lost deals consistently mention our reporting depth as inferior to Competitor X”)

- The supporting quotes (3-5)

- The recommendation (product fix, marketing reframe, sales talk track)

- The owner

Resist the urge to write a deck. Write recommendations.
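If you track summaries in code or a lightweight tool rather than a doc, a record like the one below makes the one-page structure hard to skip. This is a hypothetical sketch; none of it is a standard format:

- The class name is illustrative.
- The `due` date field is an assumption, drawn from the advice that every recommendation needs a name and a deadline.
- The validation rules are assumptions too.

```python
from dataclasses import dataclass
from datetime import date

@dataclass
class ThemeSummary:
    """One page per theme: the pattern, 3-5 quotes, a recommendation, an owner."""
    pattern: str
    quotes: list[str]
    recommendation: str
    owner: str
    due: date  # hypothetical deadline field

    def __post_init__(self):
        # Enforce the structure: enough evidence, and someone accountable.
        if not 3 <= len(self.quotes) <= 5:
            raise ValueError("use 3-5 supporting quotes")
        if not self.owner.strip():
            raise ValueError("every recommendation needs an owner")
```

A summary with one quote or a blank owner fails at creation time. That is the point: it cannot quietly slip into the readout.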

Stage 6: Dissemination and Action

Share the findings with product, marketing, and sales leadership. Each team picks 1-2 items to act on this quarter. Track whether the action ships.

The program that runs all six stages once and stops produces a report nobody reads. The program that runs the loop quarterly produces compounding intelligence.

The 12 Questions Every Win-Loss Interview Should Ask

Use this script as a starting point. Adjust order and follow-ups based on the conversation.

1. How did you first realize you needed a product like this? (Origin story - reveals the trigger.)

2. Who was involved in the buying decision, and what role did each person play? (Buying committee.)

3. What alternatives did you evaluate? (Real competitive set, not the one you assumed.)

4. What were the top 3-5 criteria you used to compare options? Rank them. (Decision weights.)

5. At what point in the process did you start leaning toward your eventual choice? (The tipping moment.)

6. What pushed you away from the options you eliminated? (Loss reasons for everyone except the winner.)

7. What surprised you - good or bad - about how we ran the process? (Sales process feedback.)

8. If we had done one thing differently, would the outcome have changed? (The counterfactual.)

9. How would you describe us to a peer who is in your shoes today? (Brand language - gold for messaging.)

10. What should we keep doing? (Reinforcement of strengths.)

11. What should we stop doing? (The hard one - reps and decks usually feature here.)

12. Anything we have not asked that you think we should know? (The bonus answer is often the most useful.)

The most valuable answers come from questions 5, 7, 9, and 11. Train interviewers to slow down on those four.

Win-Loss Categorization: Patterns Worth Acting On

After 30-50 interviews, the same 6-8 patterns tend to show up at most B2B companies. The framework below is a starter kit for what to look for.

| Pattern | What it sounds like | Fix lives in |
|---|---|---|
| Discovery shallow | “They jumped to demo without understanding our process” | Sales (discovery script) |
| Demo missed | “The demo was generic - it could have been any customer” | Sales engineering, demo environment |
| Pricing opacity | “We could not figure out what we would actually pay at scale” | Pricing page, packaging |
| Reference gap | “We could not find a customer like us in your case studies” | Customer marketing |
| Integration gap | “We needed it to work with X and it did not” | Product roadmap |
| Champion abandoned | “Our internal champion left and the new person started over” | Sales (multi-threading) |
| Competitor strength | “Competitor Y has a feature we needed for our use case” | Product, battlecards |
| Status quo wins | “The pain was not bad enough to switch this year” | Marketing (urgency frame) |

A pattern matters when it appears in 4+ of 12 interviews. Anything below that is anecdote.
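The 4-of-12 rule can be expressed as a share of interviews. That way it still applies when a quarter yields 10 or 15 interviews instead of 12.

Scaling the threshold proportionally (one third of interviews) is an assumption of this sketch, not part of the rule as stated. `classify` is a hypothetical helper, and `Fraction` keeps the comparison exact, so 4 of 12 lands exactly on the line.

```python
from fractions import Fraction

# The "4+ of 12 interviews" rule, scaled to a share (an assumption): one third.
THRESHOLD = Fraction(4, 12)

def classify(pattern_counts, total_interviews, threshold=THRESHOLD):
    """Label each pattern 'pattern' or 'anecdote' by its share of interviews."""
    labels = {}
    for name, count in pattern_counts.items():
        share = Fraction(count, total_interviews)
        labels[name] = "pattern" if share >= threshold else "anecdote"
    return labels

print(classify({"Pricing opacity": 4, "Reference gap": 3}, 12))
# {'Pricing opacity': 'pattern', 'Reference gap': 'anecdote'}
```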

Win-Loss Program Outputs

The deliverables that turn research into pipeline:

- Quarterly findings deck for executive leadership (10-15 slides max)

- Updated battlecards for the top 3-5 competitors that show up in interviews

- Refreshed positioning if buyer language has shifted (see Product Positioning and What Is Market Positioning)

- Sales talk tracks for the top 3 objections heard in losses

- Product gap list ranked by deal impact and prevalence

- A “voice of the customer” library of quotes for marketing and content teams

For the broader competitive intelligence work that win-loss feeds into, see Competitive Intelligence Analysis and Competitive Battlecard Template.

In-House vs Third-Party Win-Loss

Both work. The trade-offs:

| Dimension | In-house | Third-party |
|---|---|---|
| Honesty of answers | Lower (buyer knows the brand) | Higher (perceived neutrality) |
| Cost | Internal time only | A few thousand dollars per interview, varies by firm |
| Speed of insights | Faster, real-time access to transcripts | 4-6 week reporting cycle |
| Coding consistency | Variable | Standardized |
| Scale | Limited by team capacity | Scales with budget |

For most teams under 200 employees, a hybrid model works best: in-house for wins (where bias is less damaging) and third-party for losses (where bias destroys the data). On the third-party side, Anova Consulting Group and Clozd are dedicated win-loss research firms. Klue (which acquired DoubleCheck Research) layers win-loss onto a broader competitive-intelligence platform. Primary Intelligence (now part of Corporate Visions, sold under the TruVoice brand) is a survey-led option.

Common Win-Loss Mistakes

The patterns that kill programs:

- Running once and stopping. Quarterly cadence or it is a one-off project, not a program.

- No baseline. Without a prior quarter to compare to, you cannot tell if your win rate is improving or just noisy.

- Findings without owners. Every recommendation needs a name and a deadline. Otherwise the deck dies in Slack.

- Treating no-decision as anything other than a loss. Lost-to-no-decision is often the largest loss bucket at B2B companies. It demands its own analysis.

- Skipping the executive readout. The CEO who hears the findings live ships fixes faster than the CEO who scans the deck on a flight.
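To make the baseline point concrete, here is a rough check of whether a quarter-over-quarter change in win rate stands out from noise. It works under these assumptions:

- You compare all closed deals in each quarter, not just the interviewed ones.
- It uses a normal-approximation (Wald) 95% interval for the difference of two proportions. This is a crude tool at small deal counts.
- `win_rate_change` is a hypothetical helper, not part of any tool named above.

```python
import math

def win_rate_change(wins_prev, deals_prev, wins_now, deals_now, z=1.96):
    """Win-rate difference between two quarters with a rough 95% Wald interval."""
    p_prev = wins_prev / deals_prev
    p_now = wins_now / deals_now
    diff = p_now - p_prev
    # Standard error of the difference of two independent proportions.
    se = math.sqrt(p_prev * (1 - p_prev) / deals_prev + p_now * (1 - p_now) / deals_now)
    low, high = diff - z * se, diff + z * se
    # An interval that straddles zero means the change may just be noise.
    looks_like_noise = low <= 0 <= high
    return diff, (low, high), looks_like_noise
```

For example, going from 20 of 100 deals won to 30 of 100 is a 10-point jump. However, its interval still includes zero, so one quarter is not enough to call it an improvement.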

The Bottom Line

Win-loss analysis is one of the most leveraged research programs in B2B because it changes three teams’ priorities at once. Product gets a ranked gap list. Marketing gets the language buyers actually use. Sales gets the objections that actually cost deals.

The barrier is operational, not intellectual. Most companies know they should do it. Few build the cadence. The ones that do compound their win rate quarter over quarter while their competitors guess.

Frequently Asked Questions

What is win-loss analysis?

Win-loss analysis is a structured research process that interviews buyers from recently won and lost deals to understand why those deals went the way they did. The output is a list of patterns - product gaps, sales mistakes, competitor strengths, and pricing friction - that feed into product, marketing, and sales decisions.

Who owns win-loss analysis?

Product marketing usually owns the program. Sales contributes the deal context. Customer research, revenue ops, or a third-party analyst runs the interviews. The findings feed into product (gaps), marketing (messaging), and sales (objection handling).

How many interviews does win-loss analysis need?

A useful read needs 8-12 interviews per quarter at minimum, balanced across won, lost-to-competitor, and lost-to-no-decision deals. Early patterns often surface by around the sixth interview. Even so, programs running fewer than six interviews a quarter are too thin to act on.

Should we use a third party or do it in-house?

Third-party analysts get more honest answers - prospects say things to a stranger they will not say to the rep who lost the deal. Boutique firms typically charge a few thousand dollars per interview; pricing varies by depth, deliverable, and program length. In-house is fine if the interviewer is not the AE who worked the deal.

What questions should win-loss interviews include?

Twelve, roughly in this order:

- what triggered the search for a product like this
- who was on the buying committee
- what alternatives they evaluated
- their decision criteria and how they weighted them
- the moment they started leaning toward their eventual choice
- what pushed them away from the options they eliminated
- what surprised them about your sales process
- whether one change would have flipped the outcome
- how they would describe you to a peer
- what you should keep doing
- what you should stop doing
- an open-ended catch-all question